Coreference Resolution Using spaCy

According to the Stanford NLP Group, “Coreference resolution is the task of finding all expressions that refer to the same entity in a text.” You can also read this Wikipedia page.

For example, in the sentence “Tom dropped the glass jar by accident and broke it”, what does “it” refer to? I am sure you will immediately say that “it” refers to “the glass jar”. This is a simple example of coreference resolution.

It can be much trickier in some cases, but humans usually have no difficulty resolving coreferences. In today’s article, I want to take a look at the “neuralcoref” Python library, which plugs into spaCy’s NLP pipeline and so extends spaCy seamlessly. You may also want to read this article.

Installing the library is simple. Just follow the instructions given here. I have heard that it does not work well with some versions of spaCy, but my version (spaCy 2.0.13) had no compatibility issues.

We need to import spaCy and load the relevant coreference model. I chose the “large” model just to be safe.
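As a rough sketch of this step, the snippet below maps a model size to a package name and loads it with `spacy.load`. The package names (`en_coref_sm`, `en_coref_md`, `en_coref_lg`) follow the naming used by the neuralcoref model releases that shipped as ready-made spaCy models; treat them as an assumption and check them against the installation instructions for your version. The helper names `coref_model_name` and `load_coref_model` are made up for illustration.

```python
# Assumed package names, following the neuralcoref releases that were
# distributed as complete spaCy models. Verify against your installation.
COREF_MODELS = {
    "small": "en_coref_sm",
    "medium": "en_coref_md",
    "large": "en_coref_lg",
}


def coref_model_name(size="large"):
    """Translate a human-readable size into a model package name."""
    try:
        return COREF_MODELS[size]
    except KeyError:
        raise ValueError(
            "Unknown model size %r, expected one of %s" % (size, sorted(COREF_MODELS))
        )


def load_coref_model(size="large"):
    """Load the coreference-enabled spaCy pipeline for the given size."""
    # Imported here so the name lookup above works even without spaCy installed.
    import spacy

    return spacy.load(coref_model_name(size))


# Typical use (requires spaCy and the model package to be installed):
#   nlp = load_coref_model("large")
#   doc = nlp("My sister has a dog and she loves him.")
```

Later neuralcoref releases attach the component to a regular English model with `neuralcoref.add_to_pipe(nlp)` instead, so the loading step may look different depending on the version you install.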

I wrote two simple functions, one to print all the coreference “mentions” in the document, and another to print the resolved pronoun references.
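The two helpers might look roughly like the sketch below. It is not the code from the download link. The function names are hypothetical, and the sketch only relies on the extension attributes that neuralcoref adds to a spaCy document (`doc._.has_coref`, `doc._.coref_clusters`) and on each cluster's `main` and `mentions` spans. A mention is treated as a pronoun when it is a single token tagged `PRP` or `PRP$`.

```python
# Penn Treebank tags for personal (PRP) and possessive (PRP$) pronouns,
# as assigned by spaCy's English tagger.
PRONOUN_TAGS = {"PRP", "PRP$"}


def collect_mentions(doc):
    """Return one (main mention, all mentions) pair per coreference cluster."""
    if not doc._.has_coref:
        return []
    return [
        (cluster.main.text, [mention.text for mention in cluster.mentions])
        for cluster in doc._.coref_clusters
    ]


def collect_pronoun_references(doc):
    """Return (pronoun, main mention) pairs for every resolved pronoun."""
    if not doc._.has_coref:
        return []
    pairs = []
    for cluster in doc._.coref_clusters:
        main = cluster.main
        for mention in cluster.mentions:
            # Skip the main mention itself; it has nothing to resolve to.
            if (mention.start, mention.end) == (main.start, main.end):
                continue
            # Keep only single-token mentions that are pronouns.
            if len(mention) == 1 and mention[0].tag_ in PRONOUN_TAGS:
                pairs.append((mention.text, main.text))
    return pairs


def print_mentions(doc):
    """Print every coreference cluster with all of its mentions."""
    for main, mentions in collect_mentions(doc):
        print(f"{main}: {mentions}")


def print_pronoun_references(doc):
    """Print each pronoun next to the mention it was resolved to."""
    for pronoun, main in collect_pronoun_references(doc):
        print(f"{pronoun} => {main}")
```

Given a document `doc` produced by the coreference pipeline, calling `print_mentions(doc)` followed by `print_pronoun_references(doc)` gives the two views used in the examples that follow. Keeping the collecting and printing steps separate makes it easy to reuse the pairs elsewhere.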

The full source code is available here.

Let us start with a simple example:

“My sister has a dog and she loves him.”

Let us see how the library resolves the references:

That is good. The pronouns are mapped correctly, as we expect.

Let us extend the above example with another sentence:

“My sister has a dog and she loves him. He is cute.”

No major challenge here. Let us see what the library does:

Great. The references are correct.

In the above examples, we implicitly assumed that the dog is male. What if we use the pronoun “her” instead of “him”, implying that the dog is female?

“My sister has a dog and she loves her.”

This is the output we get in this case:

Clearly, the mapping of “her” to “My sister” is not what we expect. Could it be because both candidates, the sister and the dog, are female? Let us try a modified example:

“My brother has a dog and he loves her.”

In this case, since “brother” refers to a male, we expect that “her” should be easily resolved to the only other object, “dog”. Let us see:

Strange! Even in this case, the library incorrectly maps “her” to “My brother”. Such errors could be due to the model, or because the machine learning approach does not lead to “understanding” in the way we humans understand text.

Let us try another example:

“Mary and Julie are sisters. They love chocolates.”

In this case, we expect “they” to refer to both “Mary and Julie” and not just one of them. Here is the output:

That is nice. Works as expected. Let us introduce a twist in this pattern:

“John and Mary are neighbours. She admires him because he works hard.”

We know that “John” is male and “Mary” is female, so “She” should map to “Mary” and both “him” and “he” should point to “John”. How does the library handle this?

That is a pleasant surprise! The system resolved the pronouns correctly.

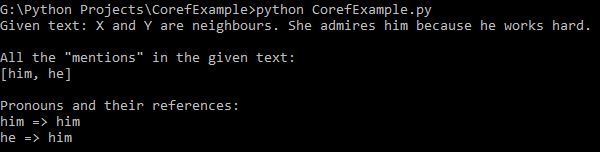

Wait. What if we use abstract names such as “X” and “Y” instead of “John” and “Mary”?

“X and Y are neighbours. She admires him because he works hard.”

This is what the library does in this case:

Let us think for a minute here. How would we, as humans, have handled this case? I feel it is acceptable if “She” maps to “X” and both “him” and “he” map to “Y”, or the other way around. But the way the library has resolved the references stumps me!

Let us try one last example:

“The dog chased the cat. But it escaped.”

This is what we get:

I think we, as humans, would have mapped “it” to “the cat” without much effort, because we understand the context. Erroneous mappings from the system appear unavoidable, given that the system does not “understand” the meaning of the sentence. As I mentioned earlier in the article, coreference resolution is a complex task, and I expect that the neuralcoref library and other similar systems will become better in due course.

In case you haven’t noticed, all the examples I have considered for this article involve one type of coreference called “anaphora”. There are other types, which can be even more difficult to handle.

It would be interesting to compare the performance of other libraries such as OpenNLP and Stanford Parser on the same set of examples. Well, that is for another article.
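One way to set up such a comparison is to record the examples from this article together with the mappings a human reader would expect, and then score any library against them. The sketch below is only a starting point under a few assumptions: a “resolver” is any function (hypothetical, to be written per library) that takes a text and returns `(pronoun, antecedent)` pairs, and an answer counts as correct only if the antecedent text matches the expected phrase exactly, ignoring case. The “X and Y” example is left out because its expected answer is ambiguous.

```python
# Expected (pronoun, antecedent) pairs for the examples in this article,
# reflecting how a human reader would resolve them.
EXAMPLES = {
    "My sister has a dog and she loves him.": [
        ("she", "My sister"), ("him", "a dog")],
    "My sister has a dog and she loves him. He is cute.": [
        ("she", "My sister"), ("him", "a dog"), ("He", "a dog")],
    "My sister has a dog and she loves her.": [
        ("she", "My sister"), ("her", "a dog")],
    "My brother has a dog and he loves her.": [
        ("he", "My brother"), ("her", "a dog")],
    "Mary and Julie are sisters. They love chocolates.": [
        ("They", "Mary and Julie")],
    "John and Mary are neighbours. She admires him because he works hard.": [
        ("She", "Mary"), ("him", "John"), ("he", "John")],
    "The dog chased the cat. But it escaped.": [
        ("it", "the cat")],
}


def _normalise(pairs):
    """Lower-case both sides so that 'He' and 'he' compare equal."""
    return {(pronoun.lower(), antecedent.lower()) for pronoun, antecedent in pairs}


def score_resolver(resolver, examples=EXAMPLES):
    """Return, per example, the fraction of expected pairs the resolver found."""
    scores = {}
    for text, expected in examples.items():
        wanted = _normalise(expected)
        predicted = _normalise(resolver(text))
        scores[text] = len(wanted & predicted) / len(wanted)
    return scores
```

Exact string matching is strict: a resolver that answers “dog” instead of “a dog” is counted as wrong, so the comparison may need loosening before drawing conclusions from the scores.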

You can download my Python code from here. Have a nice weekend!